The terms “accuracy” and “precision” are often used interchangeably. However, in the field of instrumentation, these terms have different meanings. And what about repeatability, stability, and reproducibility?

What is the difference?

In baseball, it would look like this: The pitcher is on the mound. The batter stands ready at the plate. First pitch. Low and outside. “Ball one…” Second pitch. Low and outside. The batter fouls into the stands behind first base. “Strike one!” Third pitch. Low and outside! The batter gets a piece of it, and fouls it behind home plate. “Strike two!” Fourth pitch. Low and outside. This time the batter connects with the ball, and sends it into the center field stands. Home run!

The pitching coach is thinking, “Good control. Very precise. Very predictable. Not accurate… We need to work with this kid!”

My dad was in the scale and balance business for over forty years. He described accuracy and precision this way: “Precision is doing something the same way every time. Accuracy is doing the right thing every time. Accuracy with precision is doing the right thing, the same way, every time.”

If you weigh an object with a known mass of 10 grams, and the balance reads 8.5 grams, the balance is not accurate. If you weigh the same object five times, and the balance reads 8.5 grams each time, it is precise, but not accurate. If you weigh the object multiple times, and the average measurement is approximately 10 grams, but you get a different reading each time, you have accuracy without precision. If the instrument is inaccurate, imprecise, or both, calibration and adjustment are required.
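The balance example can be put into numbers. The sketch below is only an illustration, not a calibration procedure: it assumes accuracy is summarized as the offset of the average reading from the known mass, and precision as the sample standard deviation of the readings. The function name `summarize` and the second set of readings are made up for the example.

```python
from statistics import mean, stdev


def summarize(readings, reference):
    """Return (bias, spread) for repeated readings of a known reference mass.

    bias   = average reading minus the reference (a measure of accuracy)
    spread = sample standard deviation of the readings (a measure of precision)
    """
    bias = mean(readings) - reference
    spread = stdev(readings)
    return bias, spread


REFERENCE_G = 10.0  # known mass from the example, in grams

# Five identical readings of 8.5 g: precise, but not accurate.
precise_not_accurate = [8.5, 8.5, 8.5, 8.5, 8.5]

# Hypothetical readings that average about 10 g but scatter widely:
# accurate on average, but not precise.
accurate_not_precise = [9.2, 10.9, 10.4, 9.1, 10.4]

for label, readings in [
    ("precise, not accurate", precise_not_accurate),
    ("accurate, not precise", accurate_not_precise),
]:
    bias, spread = summarize(readings, REFERENCE_G)
    print(f"{label}: bias = {bias:+.2f} g, spread = {spread:.2f} g")
```

A large bias with almost no spread points to an instrument that needs adjustment. A small bias with a large spread points to something in the measurement process that is not consistent.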

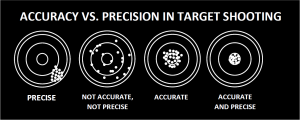

Target shooting gives a simple visual demonstration of accuracy vs. precision.

Accuracy asks, “How close is the measured value to a known standard?” If the standard changes, anything compared to it will be inaccurate. Accuracy is the “correctness” of a quantity relative to a known standard.

Precision asks, “How close are two or more measurements (or two or more sets of measurements) to each other?” Precision includes repeatability, reproducibility, and stability. Repeatability is consistency of the measurement. Does it work the same way under the same conditions over and over again? Reproducibility is the ability of the instrument to produce the same result without adjustment, each time. Stability describes the ability of the instrument to remain constant over time. How resistant to drift will it be with changing factors such as temperature, flow, pressure, etc.? Precision is independent of accuracy. You can be precise, but inaccurate.
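Stability can be looked at in the same spirit. As a rough sketch, assume the same check weight is placed on the balance once a week and the readings are logged. A straight-line fit through those readings then gives an estimate of drift per day. The function name `drift_per_day` and the logged values are hypothetical, and a real stability study would also track temperature and other conditions.

```python
def drift_per_day(days, readings):
    """Least-squares slope of readings against time (reading units per day)."""
    n = len(days)
    t_mean = sum(days) / n
    r_mean = sum(readings) / n
    # Covariance of time and reading, divided by the variance of time.
    numerator = sum((t - t_mean) * (r - r_mean) for t, r in zip(days, readings))
    denominator = sum((t - t_mean) ** 2 for t in days)
    return numerator / denominator


# Hypothetical weekly readings of a 10 g check weight, in grams.
days = [0, 7, 14, 21, 28]
readings = [10.00, 10.01, 10.02, 10.03, 10.04]

print(f"estimated drift: {drift_per_day(days, readings):+.4f} g/day")
```

A slope near zero suggests the instrument is holding steady. A slope that keeps pointing the same way from month to month suggests drift that calibration will need to correct.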

In instrumentation calibration, known standards such as weights, buffers, and gases should be stable. If they are not, your calibration and future measurements will be inaccurate. Weights require periodic re-certification. Buffers and gases have expiration dates and must be replaced. Electrical test equipment, flowmeters, pressure transmitters, etc. require comparison to master meters at scheduled intervals. Once an initial calibration is made and confirmed, the instrument is accurate.

Precision is a function of the complete operating system. With balances, this includes the operator, the procedure, the environment, and any connection to other instruments or computers. With flowmeters or pH systems, it also includes plumbing conditions such as connections, pressure, flow rate, and temperature. As these factors change, the repeatability and reproducibility of the measurement can change, resulting in loss of precision.

For Dad, “doing the right thing” with balances and scales meant adjusting for accuracy and precision. Otherwise, they didn’t leave the shop. Do the right thing, every time. Thanks, Dad!